It only took twenty four hours after Google’s Gemini was publicly released for someone to notice that chats were being publicly displayed in Google’s search results. Google quickly responded to what appeared to be a leak. The reason how this happened is quite surprising and not as sinister as it first appears.

@shemiadhikarath tweeted:

“A few hours after the launch of @Google Gemini, search engines like Bing have indexed public conversations from Gemini.”

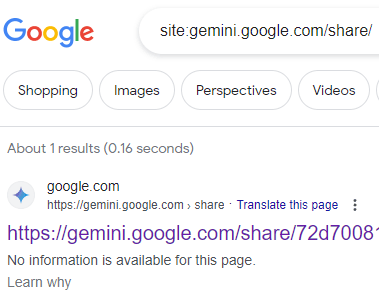

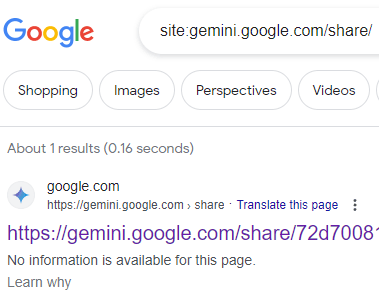

They posted a screenshot of the site search of gemini.google.com/share/

But if you look at the screenshot, you’ll see that there’s a message that says, “We would like to show you a description here but the site won’t allow us.”

By early morning on Tuesday February 13th the Google Gemini chats began dropping off of Google search results, Google was only showing three search results. By the afternoon the number of leaked Gemini chats showing in the search results had dwindled to just one search result.

How Did Gemini Chat Pages Get Created?

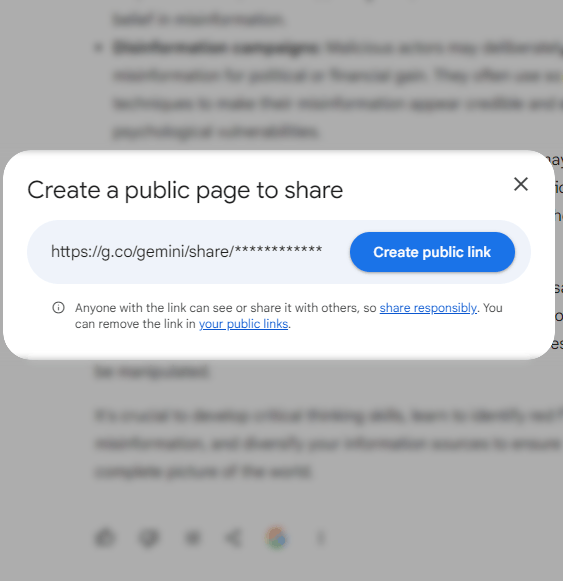

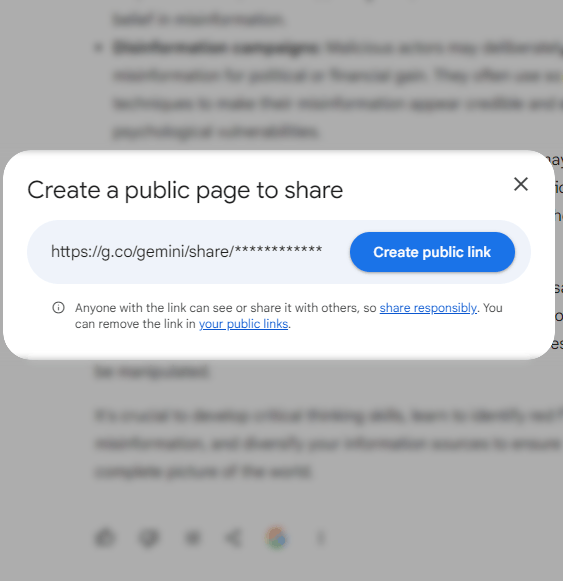

Gemini offers a way to create a link to a publicly viewable version of a private chat.

Google does not automatically create webpages out of private chats. Users create the chat pages through a link at the bottom of each chat.

Screenshot Of How To Create a Shared Chat Page

Why Did Gemini Chat Pages Get Indexed?

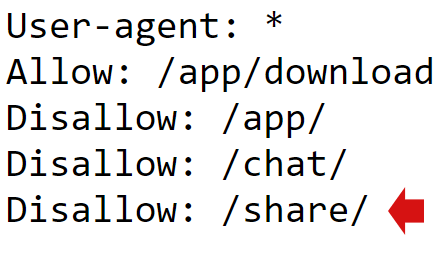

The obvious reason for why the chat pages were crawled and indexed is because Google forgot to put a robots.txt in the root of the Gemini subdomain, (gemini.google.com).

A robots.txt file is a document for controlling crawler activity on websites. A publisher can block specific crawlers by using commands standardized in the Robots.txt Protocol.

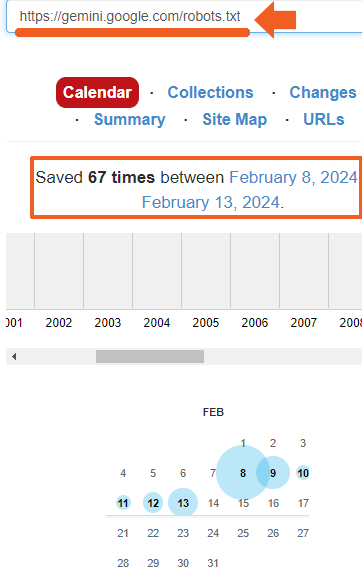

I checked the robots.txt at 4:19 AM on February 13th and saw that one was in place:

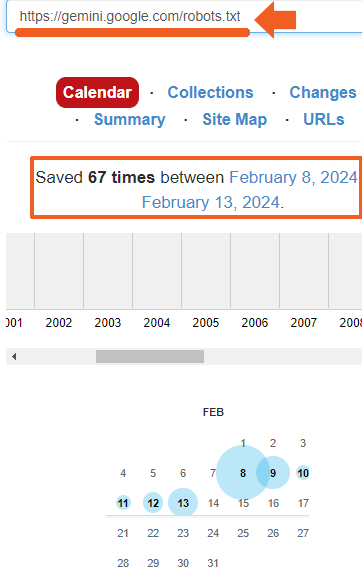

I next checked the Internet Archive to see how long the robots.txt file has been in place and discovered that it was there since at least February 8th, the day that the Gemini Apps were announced.

That means that the obvious reason for why the chat pages were crawled is not the correct reason, it’s just the most obvious reason.

Although the Google Gemini subdomain had a robots.txt that blocked web crawlers from both Bing and Google, how did they end up crawling those pages and indexing them?

Two Ways Private Chat Pages Discovered And Indexed

- There may be a public link somewhere.

- Less likely but maybe possible is that they were discovered through browsing history linked from cookies.

It’s likelier that there’s a public links. But if there’s a public link then why did Google start dropping chat pages altogether? Did Google create an internal rule for the search crawler to exclude webpages from the /share/ folder from the search index, even if they’re publicly linked?

Insights Into How Bing and Google Search Index Content

Now here’s the really interesting part for all the search geeks interested in how Google and Bing index content.

The Microsoft Bing search index responded to the Gemini content differently from how Google search did. While Google was still showing three search results in the early morning of February 13th, Bing was only showing one result from the subdomain. There was a seemingly random quality to what was indexed and how much of it.

Why Did Gemini Chat Pages Leak?

Here are the known facts: Google had a robots.txt in place since the February 8th. Both Google and Bing indexed pages from the gemini.google.com subdomain. Google indexed the content regardless of the robots.txt and then began dumping them.

- Does Googlebot have a different instructions for indexing content on Google subdomains?

- Does Googlebot routinely crawl and index content that is blocked by robots.txt and then subsequently drop it?

- Was the leaked data linked from somewhere that is crawlable by bots, causing the blocked content to be crawled and indexed?

Content that is blocked by Robots.txt can still be discovered, crawled and end up in the search index and ranked in the SERPs or at least through a site:search. I think this may be the case.

But if that’s the case, why did the search results begin to drop off?

If the reason for the crawling and indexing was because those private chats were linked from somewhere, was the source of the links removed?

The big question is, where are those links? Could it be related to annotations by quality raters that unintentionally leaked onto the Internet?